A platform for building transparent, trustworthy AI solutions

Superintelligent empowers organizations to build AI that is fair, interpretable, auditable, and understandable.


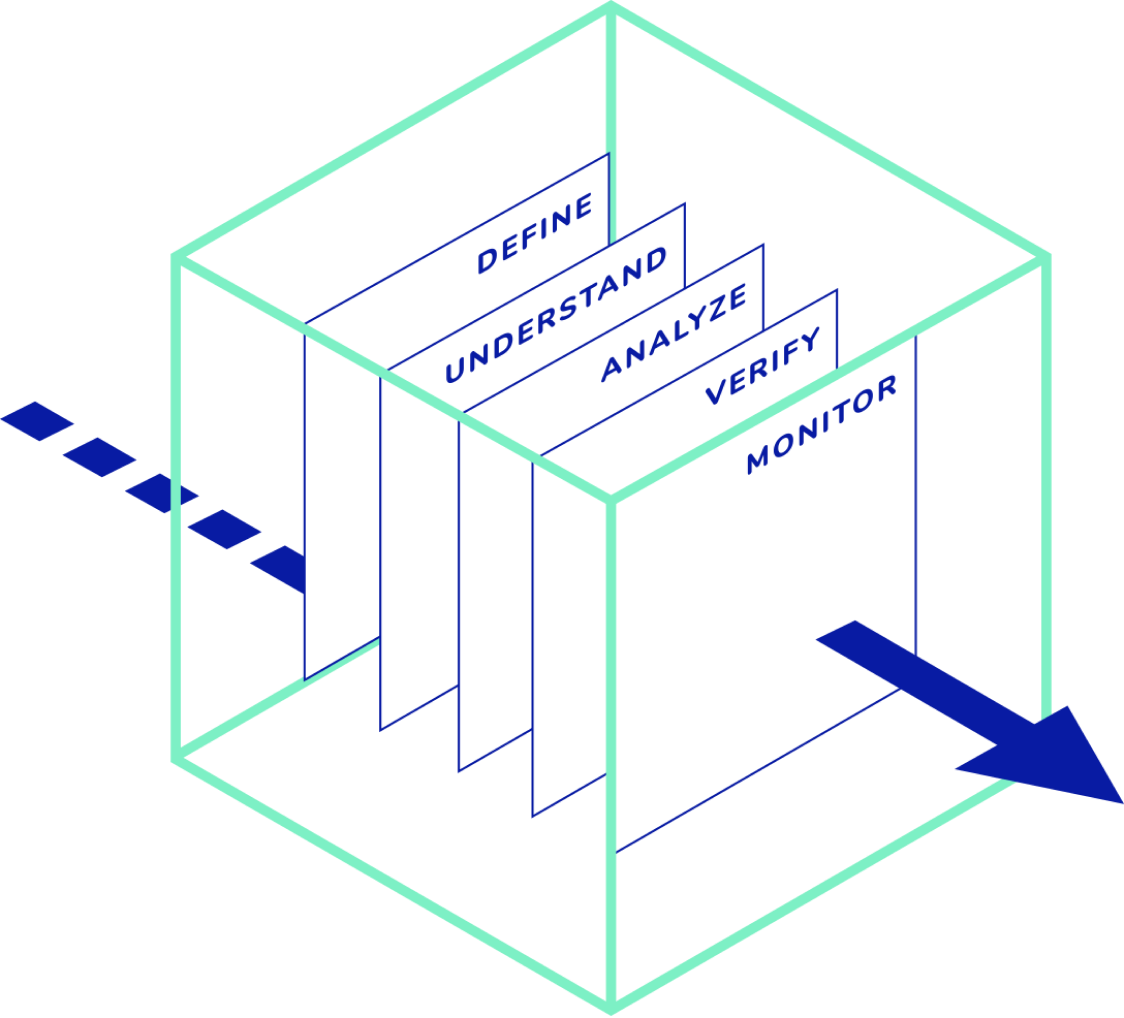

How Superintelligent works

Superintelligent works across stakeholders and systems throughout the AI development lifecycle. It provides a platform to continuously define, audit, and monitor AI solutions at scale.

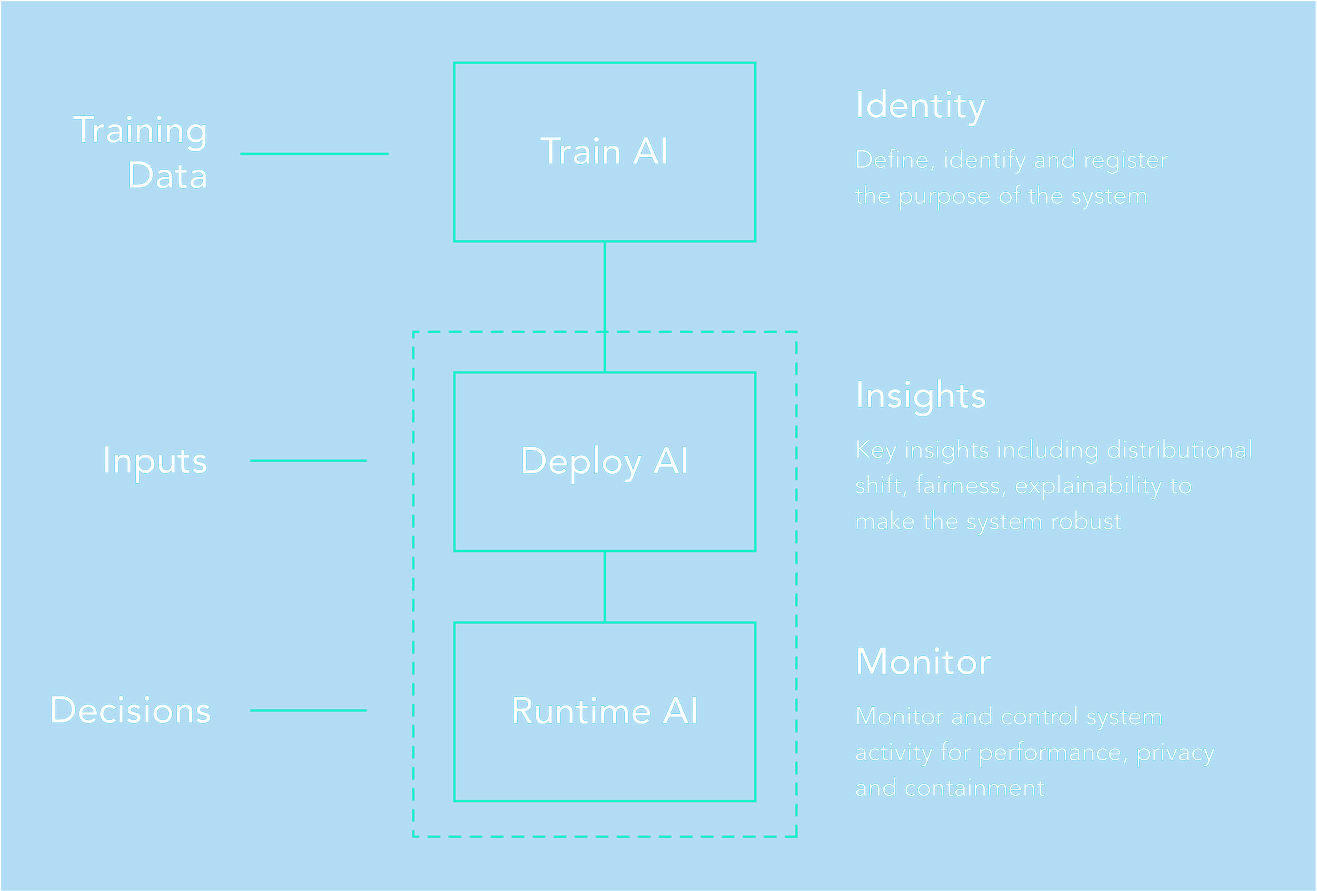

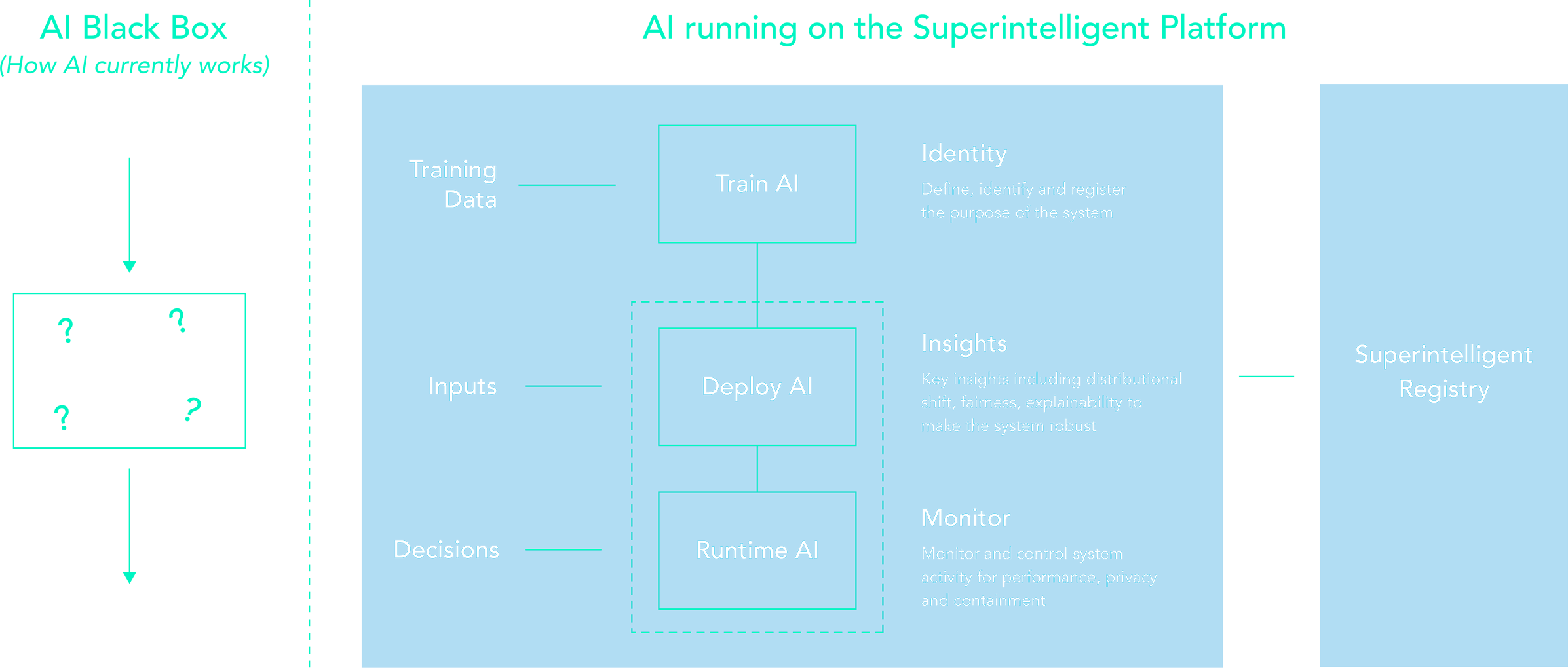

Identity

An industry-first open registry of AI disclosures, providing an organized, transparent repository of information about AI systems.

Insights

A brand-new platform for analyzing models, and the data those models use, to deliver human-understandable AI outcomes.

Monitor

An AI performance monitoring and auditing platform that empowers organizations to build high-performance, trustworthy, and compliant AI solutions.

Superintelligent Platform

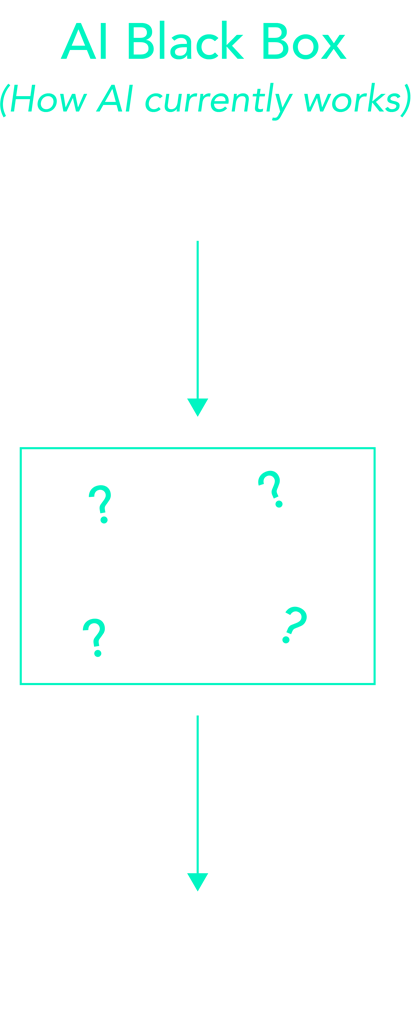

Demystifying the AI Black Box

Current AI systems are opaque, non-intuitive, and difficult to understand. As a user, developer, or business owner, it's hard to answer even simple questions such as: Why did you do that? When do you succeed or fail? When can I trust you?

The Superintelligent platform empowers AI system development by providing specification, robustness, and assurance throughout the AI development lifecycle.

Build transparent, trustworthy, understandable AI solutions with Superintelligent

Our Story

In many ways, the history of robotics and artificial intelligence is also the history of humanity's attempts to control such technologies. Recent advances in the research, development, and deployment of intelligent systems are well publicized, but the transparency, safety, and security issues they raise are rarely addressed. Our goal is to enable businesses of all sizes to unlock the AI black box and deliver trustworthy AI solutions to their customers.

We created Superintelligent because we believe there is a need for a human-friendly AI glass-box platform that unravels the black box and empowers organizations to build transparent, auditable, trustworthy, and understandable AI solutions.

Dr. Roman Yampolskiy

Co-founder, Superintelligent